Q* Algorithm: Did OpenAI Find a New Breakthrough in AI?

In the wake of last week's dramatic events at OpenAI, several news outlets have published further intriguing reports. In a previous article, I wrote that the board of directors of OpenAI appeared to be alarmed at the possibility that unsafe AI technology would be unleashed on the world. They wanted to fire Sam Altman, who favored rapid development followed by public release, rather than a slower, more cautious approach.

Now some media outlets are reporting that OpenAI was working on a potentially powerful algorithm that could point the way toward Artificial General Intelligence, if not Superintelligence. The algorithm is reportedly called Q* (pronounced “Q-star”).

The web is now full of theories about what exactly Q* is. In this article, I want to discuss the simplest explanation. (In the spirit of Occam’s Razor, the simplest explanation is probably the most likely one.)

Most experts suspect the “Q” comes from Q-learning, a well-known Reinforcement Learning algorithm. So what is Reinforcement Learning?

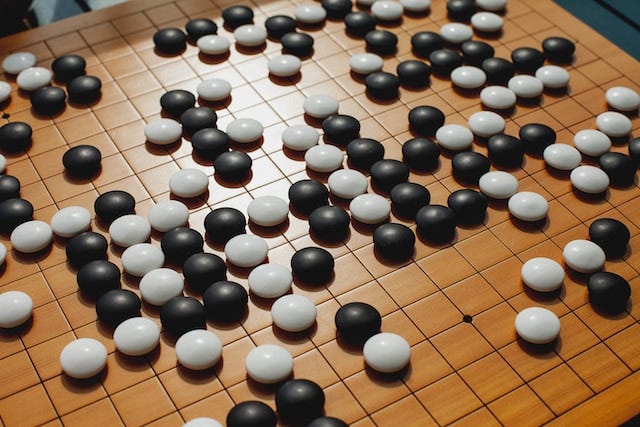

You may remember that long before ChatGPT made a splash, a program called AlphaGo made headlines. Let me remind you: back in 2016, AlphaGo defeated Lee Sedol, one of the world's top Go players, in four out of five games. It went on to beat other human masters dozens more times without another loss, for a record of 73-1.

Go (called Weiqi in Chinese) is a game invented in China roughly 3,000 years ago. It is played on a 19x19 grid, which makes Go mathematically more complex than chess, played on an 8x8 board, by many orders of magnitude. For this reason, many believed a computer would not beat a human master at Go anytime soon.

Then came AlphaGo, developed by DeepMind. It was a deep neural network program trained using Reinforcement Learning. The idea behind Reinforcement Learning is simple: the AI tries different moves in a game, the game tells the AI whether it won or lost, and the AI learns from its successes and mistakes. After many, many simulated games, the AI becomes much better even without human instruction. (The original AlphaGo was first trained on records of human expert games, then improved further by playing against itself.) AlphaGo reportedly played around ten million simulated games of Go before it reached mastery.
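To make that loop concrete, here is a minimal sketch of reward-driven learning using tabular Q-learning, the algorithm the “Q” in Q* may refer to. It is not how AlphaGo was trained (AlphaGo combined deep neural networks with Monte Carlo tree search), and the corridor “game,” its reward, and the hyperparameters below are hypothetical choices for illustration. The agent is told only when it wins; each update nudges its estimate Q(s, a) toward the reward plus γ times the best estimate for the next state.

```python
import random

# Hypothetical toy "game": a corridor of cells 0..5. The agent starts at cell 0.
# Reaching the last cell is a win (+1); every other step gives 0.
N_STATES = 6
ACTIONS = (-1, +1)  # index 0 = move left, index 1 = move right

def step(state, action):
    """Apply a move and return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    if next_state == N_STATES - 1:
        return next_state, 1.0, True
    return next_state, 0.0, False

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    # q[s][i] estimates the long-run reward of taking ACTIONS[i] in state s.
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                i = rng.randrange(2)  # explore: try a random move
            else:
                best = max(q[state])  # exploit: best-known move, ties broken randomly
                i = rng.choice([j for j in range(2) if q[state][j] == best])
            next_state, reward, done = step(state, ACTIONS[i])
            # Q-learning update: move the estimate toward reward + discounted best future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][i] += alpha * (target - q[state][i])
            state = next_state
    return q

q = train()
policy = ["right" if q[s][1] > q[s][0] else "left" for s in range(N_STATES - 1)]
print(policy)
```

Nobody tells the agent which direction is correct; after enough simulated games, the learned values alone make “right” the preferred move in every cell.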

The world was excited and terrified. Now we had an AI that could swat down the smartest humans like flies. It looked as if the age of Artificial Superintelligence was upon us.

Then Reinforcement Learning hit a brick wall.

After 2016, no comparably groundbreaking AI news arrived until OpenAI's. In 2017, DeepMind introduced AlphaGo Zero, an improved version that learned entirely through self-play and could beat the original AlphaGo. Did anyone care?

Sure, it could play Go, a game significantly more complex than chess. But the real world, unfortunately, is far more complex than Go. For Reinforcement Learning to work, you need to reduce the world to input vectors and output vectors, and those vectors need to be relatively simple. Go is limited to 361 discrete points where stones can be placed. Therefore, the entire world of Go can be reduced to a vector of 361 elements, each of which has just three possible states: a black stone, a white stone, or empty. The output move can land only on one of those 361 points. Even so, the hardware required to run AlphaGo reportedly cost around 20 million dollars. It was not going to run on your laptop anytime soon.
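Roughly, the reduction described above can be sketched as follows, assuming a hypothetical encoding in which 0, 1, and 2 stand for empty, black, and white (AlphaGo's actual input was richer than this). Because each of the 361 points has three states, 3^361, or about 10^172, is an upper bound on the number of board configurations; many of them are not legal positions, so the true count is somewhat smaller.

```python
import math

BOARD_SIZE = 19
EMPTY, BLACK, WHITE = 0, 1, 2  # the three possible states of each point

def encode(stones):
    """Flatten a dict {(row, col): BLACK or WHITE} into a 361-element list."""
    board = [EMPTY] * (BOARD_SIZE * BOARD_SIZE)
    for (row, col), color in stones.items():
        board[row * BOARD_SIZE + col] = color
    return board

vec = encode({(3, 3): BLACK, (15, 15): WHITE})
print(len(vec))  # 361 input elements; a move is likewise one of 361 points

# Upper bound on board configurations: 3 choices for each of 361 points.
configs = 3 ** (BOARD_SIZE * BOARD_SIZE)
print(f"about 10^{math.log10(configs):.0f} configurations")
```

The point is not the exact number but the shape of the problem: a fixed-size vector with a handful of states per slot, which is exactly what the real world does not offer.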

When you think about how many words there are in English, or about the many other real-world problems that are continuous rather than discrete, it becomes apparent that the real world can’t be reduced to simple games. When transformer-powered LLMs entered the picture, it appeared that RL was not the right path to human-level intelligence.

Or maybe it is?

If OpenAI’s Q* is indeed based on, or inspired by, Q-learning (again, a Reinforcement Learning algorithm), it could mean that OpenAI found a way to marry the power of LLMs with the self-learning capability of Q-learning.

This is significant because an LLM is NOT a self-learning machine. ChatGPT does not teach itself, and it is not learning at a geometric rate.

All LLMs, including ChatGPT, have been trained on large amounts of human-written text. They can’t go beyond the level of knowledge and creativity produced by humans. RL, on the other hand, provides a true self-learning machine. AlphaGo taught itself by playing millions of simulated games against itself. It eventually learned to be “creative,” playing the kind of moves that even human masters hadn't thought of.

Okay, then what about the “*” part? Some experts suspect it may refer to the A* algorithm.

Did you ever wonder how your GPS device finds the shortest route from A to B when there are hundreds or thousands of streets and countless ways to combine them? A* is a relatively straightforward AI path-finding algorithm. It is neither a machine learning algorithm nor a deep learning algorithm. Variations of the A* algorithm power everything from GPS navigation systems and robot vacuum cleaners to the way characters move in video games.

A* is based on Dijkstra’s shortest-path algorithm, conceived in 1956, and A* itself was published in 1968, so it is hardly a new invention. A* improves on Dijkstra’s algorithm by adding “heuristics.” If you are driving from San Francisco to Los Angeles, it makes sense to move generally south. Instead of expanding outward in every direction equally, A* ranks each place it could visit next by the distance traveled so far plus an estimate of the distance remaining, so it tries routes heading south first.
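Here is a minimal sketch of the difference, assuming a hypothetical 10x10 grid with a wall in the middle in place of a road map. A* ranks each candidate cell by f = g + h, the distance traveled so far (g) plus a heuristic estimate of the distance remaining (h). Here h is the Manhattan distance, which never overestimates on this grid, so A* still finds a shortest path. Setting h to zero turns the same code into Dijkstra's algorithm, which makes the comparison easy. The function and grid are illustrative, not what any real navigation system uses.

```python
import heapq

def shortest_path(grid, start, goal, use_heuristic=True):
    """Search a grid of "." (open) and "#" (wall) cells, moving in 4 directions.

    Returns (path_length, cells_expanded). With use_heuristic=False every
    estimate is 0, which makes this Dijkstra's algorithm; with True it is A*
    using Manhattan distance as the heuristic.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        if not use_heuristic:
            return 0
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    best_g = {start: 0}                # cheapest known cost to reach each cell
    frontier = [(h(start), 0, start)]  # priority queue of (g + h, g, cell)
    expanded = 0
    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if g > best_g[cell]:
            continue                   # outdated entry; a cheaper route was found
        expanded += 1
        if cell == goal:
            return g, expanded
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == ".":
                ng = g + 1
                if ng < best_g.get(nxt, float("inf")):
                    best_g[nxt] = ng
                    heapq.heappush(frontier, (ng + h(nxt), ng, nxt))
    return None, expanded              # goal unreachable

grid = [
    "..........",
    "..........",
    "..........",
    ".....#....",
    ".....#....",
    ".....#....",
    ".....#....",
    ".....#....",
    "..........",
    "..........",
]

for name, flag in (("Dijkstra", False), ("A*", True)):
    length, expanded = shortest_path(grid, (5, 0), (5, 9), use_heuristic=flag)
    print(f"{name}: path length {length}, cells expanded {expanded}")
```

Both searches return the same path length, but A* expands fewer cells because it never bothers with cells that lead far away from the goal, much like not driving north out of San Francisco on the way to Los Angeles.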

A heuristic is a rule of thumb that is not guaranteed to give you the right solution but is generally a good way to approximate right solutions, or at least to prune solutions that are likely wrong. Occam’s Razor, mentioned earlier, is a form of heuristic. In fact, we humans rely heavily on heuristics every day, often a little too much.

One of the problems with Reinforcement Learning is that the AI is frequently burdened with too many choices and too many diverging paths. An AlphaGo-like AI at the beginning of training would make tons of stupid moves. It would literally take millions of simulated games before it became good at distinguishing bad moves from good ones. But real-life human masters do not (and cannot) play millions of games to become good at their craft. They develop heuristics and intuition to quickly narrow down a set of acceptable moves.
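A rough sketch of why narrowing the candidate moves matters, assuming a deliberately naive, hypothetical heuristic that simply prefers points near the center of the board (not a good Go strategy). Counting the possible sequences of the next four moves from an empty board, ignoring captures and passes, shows how much a five-move shortlist shrinks the search.

```python
import math

SIZE = 19
ALL_POINTS = [(r, c) for r in range(SIZE) for c in range(SIZE)]

def near_center(point):
    """Hypothetical toy heuristic: score points higher the closer they are to the center."""
    r, c = point
    return -(abs(r - SIZE // 2) + abs(c - SIZE // 2))

def top_k_moves(candidates, heuristic, k):
    """Keep only the k candidate moves the heuristic scores highest."""
    return sorted(candidates, key=heuristic, reverse=True)[:k]

shortlist = top_k_moves(ALL_POINTS, near_center, k=5)
print(shortlist)

# How many 4-move sequences must a search consider from an empty board?
depth = 4
full = math.perm(len(ALL_POINTS), depth)  # any empty point on each turn (captures ignored)
pruned = 5 ** depth                       # only a 5-move shortlist on each turn
print(f"{full:,} sequences vs {pruned:,}")
```

Every extra move of look-ahead multiplies the unpruned count by roughly 360 but the pruned count by only 5. A good heuristic buys that saving without throwing away the good moves, which is the role intuition plays for human masters.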

If OpenAI developed not only a way to apply reinforcement learning to LLMs but also a way to make the process efficient and practical through heuristics-powered path finding, the name “Q*” makes sense. This might lead to RL being applied to general real-world problems instead of games simulated inside computers. If so, we may have a truly self-learning, human-surpassing, world-changing AI in the near future. This might have worried OpenAI's board of directors enough to try to derail, or at least slow down, developments at OpenAI.

Again, as of now, everything in this article is little more than conjecture, but conjecture based on “heuristics.” Time will tell what comes out of this interesting event. We could be facing exciting possibilities, dangerous challenges, or both.