Google's Gemini AI: How Will It Compete with ChatGPT?

In this issue, I would like to delve deeper into Gemini, Google’s current flagship AI product. While OpenAI has been the nearly undisputed leader in AI, I don’t think Google can be counted out. Google still leads in search, data, and cloud infrastructure, not to mention its deep pockets.

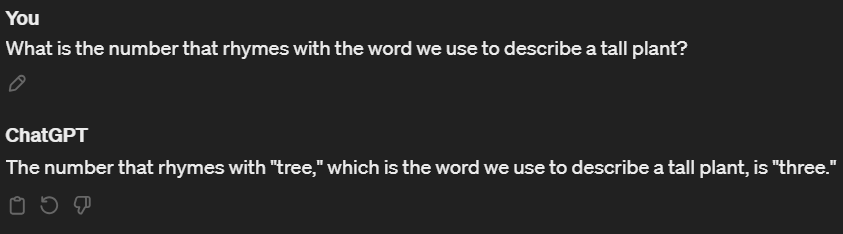

To illustrate the performance differences between the models, I ran a few experiments with a simple riddle.

GPT-4 Turbo (ChatGPT Plus, paid version):

Google’s Gemini Ultra (paid version):

Gemini Pro (free version):

Gemini Pro’s answer is not completely wrong. It just missed the point of the riddle and didn’t grasp the full meaning of the question.

GPT-3.5 (ChatGPT free version):

At least Gemini Pro didn’t hallucinate outright, unlike GPT-3.5.

Despite the noticeable differences in quality, I believe GPT-3.5 users, including those on the free version of ChatGPT, now have a compelling alternative. Both GPT-3.5 and Gemini Pro are fit for less demanding tasks such as sentiment analysis, categorization, translation, and simple copywriting, and both cost significantly less and run faster than GPT-4.

What is Google offering to application builders?

Unlike OpenAI, Google offers a free tier for its Gemini API as part of its cloud offering. An API is an important building block for the applications, servers, mobile apps, and other software we use: it lets you build your own AI tools and custom LLM chains. To be accepted by the industry, Google needs to offer a compelling API, and now anyone with a Google Cloud account can use it. Just go to your account and enable the “Generative Language API.”

https://console.cloud.google.com/marketplace/product/google/generativelanguage.googleapis.com

Once you have your API key, calling the Gemini API from Python is pretty easy:

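```python
import os

import google.generativeai as genai

# Assumption: the key is stored in the GOOGLE_API_KEY environment variable.
GOOGLE_API_KEY = os.environ["GOOGLE_API_KEY"]
genai.configure(api_key=GOOGLE_API_KEY)

# 'gemini-pro' is the text-only Gemini Pro model.
model = genai.GenerativeModel('gemini-pro')

# stream=False returns the complete answer in a single response object.
response = model.generate_content("What is the meaning of life?", stream=False)

# Print the text of the first candidate answer.
print(response.candidates[0].content.parts[0].text)
```

The output goes on for a while; here is how it begins: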

The meaning of life is a complex and subjective question that has been pondered by philosophers, scientists, and individuals throughout history. There is no single, universally accepted answer, as the meaning of life is unique to each person and can vary over time.

Some commonly cited perspectives on the meaning of life include:

Price Competition with ChatGPT

The Gemini API’s free tier is limited to 60 queries per minute, with no stated limit on the number of tokens or characters. This means the free tier should be sufficient for personal, internal, and small-scale use cases, but not for a large commercial application expecting hundreds of simultaneous users. So how does the paid tier (coming soon) compare with OpenAI?
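If you build on the free tier, it helps to throttle requests on your side so you stay under the 60-queries-per-minute limit instead of running into errors. Below is a minimal sketch of a sliding-window rate limiter in plain Python; the class and method names are my own (hypothetical), and nothing here talks to Google’s API. The 60-per-minute default simply mirrors the limit quoted above.

```python
import time
from collections import deque
from typing import Callable, Deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` calls in any `period`-second window.

    The defaults mirror the 60-queries-per-minute free tier described above.
    """

    def __init__(self, max_calls: int = 60, period: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_calls = max_calls
        self.period = period
        self.clock = clock                  # injectable so it can be tested
        self.calls: Deque[float] = deque()  # timestamps of accepted calls

    def _evict(self, now: float) -> None:
        # Forget calls that are at least one full window old.
        while self.calls and now - self.calls[0] >= self.period:
            self.calls.popleft()

    def try_acquire(self) -> bool:
        """Return True (and record the call) if it fits in the window."""
        now = self.clock()
        self._evict(now)
        if len(self.calls) < self.max_calls:
            self.calls.append(now)
            return True
        return False

    def wait_time(self) -> float:
        """Seconds until the next call would be accepted (0.0 if right now)."""
        now = self.clock()
        self._evict(now)
        if len(self.calls) < self.max_calls:
            return 0.0
        return self.period - (now - self.calls[0])


# Demo with a fake clock so the example runs instantly.
fake_now = [0.0]
limiter = SlidingWindowLimiter(clock=lambda: fake_now[0])
allowed = sum(limiter.try_acquire() for _ in range(100))
print(allowed)                # 60: the remaining 40 calls are rejected
print(limiter.wait_time())    # 60.0
fake_now[0] = 60.0            # one minute later, the window is empty again
print(limiter.try_acquire())  # True
```

In a real script you would call `try_acquire()` before each `generate_content` request and `time.sleep(limiter.wait_time())` when it returns False. A sliding window is stricter than a fixed per-minute counter, which makes it a safe choice when you don’t know exactly how the server counts.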

Let me compare this with OpenAI’s API. OpenAI’s GPT-4 Turbo model charges about $0.01 per 1000 input tokens and $0.03 per 1000 output tokens. On average, one token corresponds to about 4 characters of English text. Using that ratio, GPT-4 Turbo charges about $0.0025 per 1000 characters read by the model and $0.0075 per 1000 characters generated by the model. For the older GPT-3.5 Turbo model ($0.0005 and $0.0015 per 1000 tokens), the numbers are $0.000125 and $0.000375.

Interestingly enough, that’s exactly the same as what Gemini Pro will charge through its API.
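The per-token to per-character conversion is easy to double-check in a few lines. This sketch assumes the rough 4-characters-per-token average mentioned above; the GPT-3.5 Turbo token prices ($0.0005 and $0.0015 per 1000 tokens) are simply four times the per-character figures, and the function and dictionary names are illustrative.

```python
import math

CHARS_PER_TOKEN = 4  # rough average for English text, as stated above


def per_1k_chars(price_per_1k_tokens: float,
                 chars_per_token: float = CHARS_PER_TOKEN) -> float:
    """Convert a price per 1000 tokens into a price per 1000 characters."""
    # 1000 characters is roughly 1000 / chars_per_token tokens.
    return price_per_1k_tokens / chars_per_token


# OpenAI prices in USD per 1000 tokens: (input, output).
OPENAI_PER_1K_TOKENS = {
    "GPT-4 Turbo": (0.01, 0.03),
    "GPT-3.5 Turbo": (0.0005, 0.0015),
}
# Gemini Pro API prices in USD per 1000 characters: (input, output).
GEMINI_PRO_PER_1K_CHARS = (0.000125, 0.000375)

for name, (inp, out) in OPENAI_PER_1K_TOKENS.items():
    print(f"{name}: ${per_1k_chars(inp):.6f} in, "
          f"${per_1k_chars(out):.6f} out per 1000 chars")

# Compare GPT-3.5 Turbo's per-character price with Gemini Pro's.
gpt35 = [per_1k_chars(p) for p in OPENAI_PER_1K_TOKENS["GPT-3.5 Turbo"]]
same_price = all(math.isclose(a, b)
                 for a, b in zip(gpt35, GEMINI_PRO_PER_1K_CHARS))
print(same_price)  # True
```

Keep in mind that the 4-characters-per-token ratio is only an average: for code or non-English text a token often covers fewer characters, which makes per-token pricing relatively more expensive than Gemini’s per-character pricing.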

So it appears that Google deliberately priced Gemini Pro to match GPT-3.5 Turbo. Google seems to believe this will convince GPT-3.5 Turbo users to switch, since Gemini Pro is reported to outperform GPT-3.5, though not GPT-4. Also, unlike OpenAI, Google’s vision pricing for the Gemini API does not depend on image size. That means processing images with Gemini should be cheaper than with OpenAI’s API as long as the images are larger than 512x512, as most images are.
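To see why size-independent pricing favors Gemini for larger images, here is a rough sketch of how OpenAI counted tokens for GPT-4 with Vision in high-detail mode: shrink the image to fit inside 2048x2048, shrink it again so the shortest side is 768px, then charge 170 tokens per 512x512 tile plus 85 base tokens. Assumptions (mine, not OpenAI’s or Google’s): images are never enlarged, scaled sizes are rounded down to whole pixels, image tokens are billed at the GPT-4 Turbo input rate of $0.01 per 1000, and the flat per-image price is a placeholder you should replace with Google’s current figure.

```python
import math

BASE_TOKENS = 85       # fixed tokens added per image (high detail)
TOKENS_PER_TILE = 170  # tokens per 512x512 tile
TILE = 512


def openai_high_detail_tokens(width: int, height: int) -> int:
    """Approximate GPT-4 Vision high-detail token count for one image."""
    # Step 1: shrink to fit inside 2048x2048, keeping the aspect ratio.
    longest = max(width, height)
    if longest > 2048:
        width, height = width * 2048 // longest, height * 2048 // longest
    # Step 2: shrink so the shortest side is 768px (assumed: never enlarged).
    shortest = min(width, height)
    if shortest > 768:
        width, height = width * 768 // shortest, height * 768 // shortest
    # Step 3: count the 512px tiles needed to cover the image.
    tiles = math.ceil(width / TILE) * math.ceil(height / TILE)
    return BASE_TOKENS + TOKENS_PER_TILE * tiles


GPT4_TURBO_INPUT_PER_1K = 0.01  # USD per 1000 input tokens, as quoted above
FLAT_PRICE_PER_IMAGE = 0.0025   # placeholder: substitute Google's current price

for w, h in [(512, 512), (1024, 1024), (4000, 3000)]:
    tokens = openai_high_detail_tokens(w, h)
    cost = tokens / 1000 * GPT4_TURBO_INPUT_PER_1K
    print(f"{w}x{h}: {tokens} tokens, ${cost:.5f} "
          f"vs flat ${FLAT_PRICE_PER_IMAGE:.5f}")
```

A single 512x512 tile costs 255 tokens, while anything that ends up covering four tiles (a 1024x1024 image, or a typical 4000x3000 photo after resizing) costs 765 tokens. A flat per-image price does not grow this way, which is the point of the comparison above.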

Where is the API for the Ultra Version?

However, the biggest current problem with the Gemini API is that Gemini Ultra, the model reported to match GPT-4’s performance, is not offered through the API. You can only use Gemini Pro and Gemini Pro Vision. I don’t think the Gemini API will be truly competitive with OpenAI until the Ultra version is available to the public. Hopefully this limitation will be lifted soon. If the Ultra version is priced lower than GPT-4, I believe we will have a very interesting competition at hand.

As a side note, Flowise AI now supports Gemini, so you can build your own chatbot on Gemini instead of ChatGPT without writing any code. And as long as you stay within the free tier, you won’t have to pay for API usage, unlike with OpenAI.

When Google releases Gemini Ultra through its API, the rivalry should get even more interesting, as its Gemini Advanced chatbot already offers a sensible alternative to ChatGPT Plus. We will see how the race goes; hopefully it will be a win for the rest of us.