ChatGPT4 Finally Dethroned? Why Claude 3 Matters.

Since 2023, people have been hyping “ChatGPT killers.” Google Gemini was once hailed as a ChatGPT killer, as in this Gizmodo article.

Currently, the free version of Gemini outperforms the free version of ChatGPT. But if you have the Plus version of ChatGPT, you haven’t had a strong reason to switch to Gemini or other LLMs... until now. Claude 3 Opus, the latest and greatest large language model from Anthropic, finally does certain things noticeably better than ChatGPT.

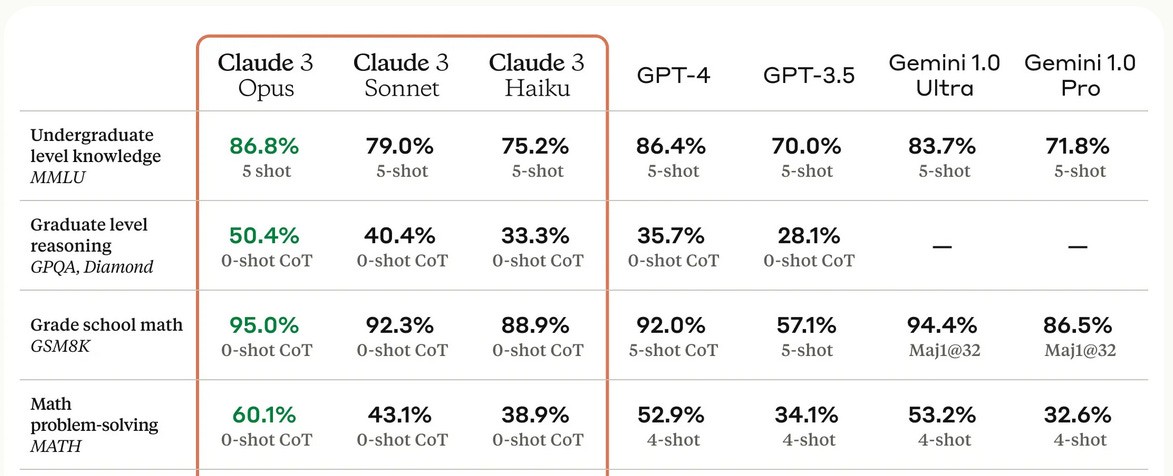

I want to reiterate that I try to stay away from promoting hype and unfounded expectations. I won’t call Claude 3 a “ChatGPT killer.” Yet I think it’s the publicly available model that comes closest to deserving the title. First of all, let’s take Anthropic at its word and look at the results of the AI benchmarks. According to the published test scores, Claude 3 Opus outperforms every competitor, including GPT-4 and Gemini Ultra.

These “benchmarks” are basically collections of questions for LLMs to answer, like the standardized tests you had to take in school. But do those test scores make a difference in real life? Let’s forget about the numbers for now and try to answer an important question.

What does it matter to you, as a consumer?

From my own subjective experience, the answers given by Claude 3 Opus generally feel slightly more focused, more relevant, and more organized than those from ChatGPT4. However, such a sentiment can be hard to quantify. If one answer feels less focused than another, it’s possible to argue that it simply gives a broader, more lateral view of the subject. The same goes for Claude 3’s vision capability. Anthropic touts that Claude 3’s vision capability outperforms ChatGPT4v. If there is a difference, it’s a subtle one. Both of them still fail to properly analyze CAPTCHA grid images.

However, I found at least one important area where Claude 3 clearly outperforms ChatGPT4: dealing with large context information. In short, Claude 3 can actually read a book hundreds of pages long. ChatGPT4 falls way short.

Problem of Context Size

Context size in large language models (LLMs) refers to the amount of text the model can consider at one time when generating answers. You can think of it as the chatbot’s short-term memory. If the context size is too small, the chatbot won’t be able to recall the full conversation it had with you. It will be forced to drop information out of its context window when it tries to answer your latest question. That’s why when you start a new session, ChatGPT doesn’t remember the contents of previous sessions and starts fresh, so it doesn’t overburden its context window. (OpenAI recently introduced the idea of saving important information about you in a long-term memory space. Thus, it may remember your birthday, for example.)
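To make the “short-term memory” idea concrete, here is a minimal sketch of how a chat application might drop older messages once a conversation outgrows the context window. It is only an illustration: the function names are made up for this example, and counting words is a crude stand-in for a real tokenizer. Actual chatbots may handle this quite differently.

```python
def count_tokens(text):
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def trim_to_window(messages, max_tokens):
    """Keep the most recent messages whose combined size fits within max_tokens."""
    kept = []
    used = 0
    # Walk backwards from the newest message and stop once the budget is spent.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # Restore chronological order.
    return kept


conversation = [
    "My name is Sam and my birthday is in May.",
    "Can you recommend a science fiction novel?",
    "Tell me more about the second one you mentioned.",
]

# With a budget of 18 "tokens", the oldest message no longer fits and is dropped.
print(trim_to_window(conversation, max_tokens=18))
```

In this toy run, the message containing the birthday is the one that falls out of the window, which is exactly the kind of forgetting described above.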

It’s very important to consider the context window size when you want your chatbot to digest a large amount of data to answer questions. You may have a book that’s hundreds of pages long, or a corporate knowledge base with a large amount of textual data. For an LLM to act truly intelligently, it must be able to digest new information it has not been trained on to answer questions.
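A quick back-of-the-envelope calculation shows why a book is a challenge. The sketch below estimates how many tokens a book takes up and whether it fits in a given window. All of the numbers are assumptions for illustration: 300 words per page, roughly 1.33 tokens per English word (a common rule of thumb, not an exact figure), and window sizes chosen as examples rather than quoted from any vendor.

```python
def estimate_tokens(pages, words_per_page=300, tokens_per_word=1.33):
    """Rough token estimate for a book; both default ratios are assumptions."""
    return round(pages * words_per_page * tokens_per_word)


def fits_in_window(pages, window_tokens, reserve_for_answer=1000):
    """Check whether the book plus some room for the answer fits in the window."""
    return estimate_tokens(pages) + reserve_for_answer <= window_tokens


book_pages = 400  # Hypothetical book length.
print(estimate_tokens(book_pages))

# Illustrative window sizes, not official specifications.
for window in (32_000, 200_000):
    print(window, fits_in_window(book_pages, window))
```

Under these assumptions, a 400-page book needs roughly 160,000 tokens, so it fits in the larger example window but not in the smaller one.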

ChatGPT progressively increased its published context window size. The problem is that the larger its context window grew, the “dumber” it seemed to get. With a large amount of text input, LLMs suffer from increased noise and “attention dilution.” If you ask a question based on a few pages of text, ChatGPT nails the answer. If you bury the answer in a hundred-page document, ChatGPT simply can’t find it and doesn’t seem to be able to recall everything. This is also known as the “needle in a haystack” problem.
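If you want to try a needle in a haystack test yourself, the setup can be sketched in a few lines. This is a simplified illustration, not a standard benchmark: the function names, the filler text, and the passphrase are all invented for this example, and the call to the chatbot is left out because it depends on which service you use.

```python
def build_haystack(filler_sentences, needle, depth):
    """Insert the needle at a relative depth (0.0 = start, 1.0 = end) of the filler text."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences)


def answer_contains(answer, expected):
    """Case-insensitive check that the model's answer mentions the expected fact."""
    return expected.lower() in answer.lower()


# Made-up filler and needle; a real test would use natural text such as a book.
filler = [f"Filler sentence number {i}." for i in range(1000)]
needle = "The secret passphrase is blue-giraffe-42."

document = build_haystack(filler, needle, depth=0.5)
prompt = document + "\n\nQuestion: What is the secret passphrase?"

# Send `prompt` to the chatbot of your choice, then grade its reply like this:
example_reply = "The passphrase is Blue-Giraffe-42."
print(answer_contains(example_reply, "blue-giraffe-42"))
```

Repeating the test with different depths and document lengths shows whether a model loses track of facts buried in the middle of a long input.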

Claude 3 Seems to Have Solved the Needle in the Haystack Problem.

While Gemini 1.5 Pro also claims to have tackled the problem, it is not available to the general public as of this writing. With Claude 3, everyone can try large documents right now and see the results.

For this test, I converted an SF novel titled Anvil of Stars by Greg Bear to a text file. While both ChatGPT4 and Claude 3 know about this novel, they don’t know how the book ends or its fine details. I fed the contents of the book into both chatbots and asked a question that can only be answered by reading every line of the book: Who does Hans date? (Hans is a secondary character who serves as a quasi-antagonist to the main character of the novel. Who he dates is completely irrelevant to the main plot of the book.)

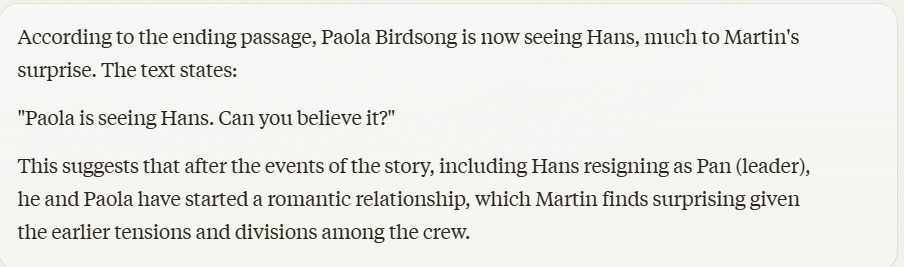

This is the response from ChatGPT Plus (ChatGPT4).

This is Claude 3’s CORRECT response.

This is a needle in the haystack problem in the sense that the answer is never mentioned before or after that one sentence quoted by Claude 3.

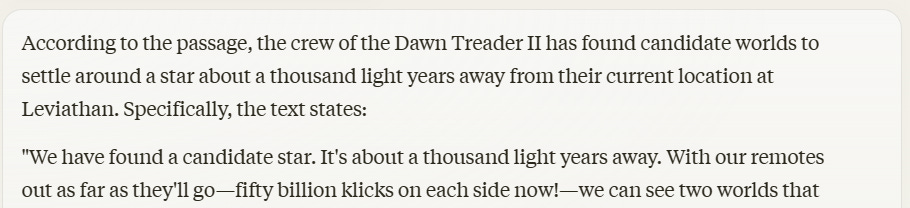

To make it a little easier for ChatGPT4, I asked a question that’s more relevant to the plot: “How far is their final destination?” The answer to the question can be found by reading the last few pages of the book. (When asked, a human would probably look at the last few pages to find the answer. ChatGPT4 missed this common-sense approach.)

Again, ChatGPT4 acts blind despite having access to the relevant text. On the other hand, Claude 3 scores with the accurate information:

Claude 3’s ability to ACTUALLY handle a large context input, unlike ChatGPT4, which tries and often fails, marks a major milestone. It can fundamentally change how LLMs are used. For instance, it can be used to analyze vast amounts of customer feedback, reviews, and social media mentions to gain valuable insights into consumer sentiment, preferences, and trends. This information can then be used to inform product development, marketing strategies, and customer service initiatives. In my tests, Claude 3 digested more data than ChatGPT4 and handled the information more accurately.

With advances in large data handling, LLMs will continue to affect the way business, science, and other fields are conducted. Claude 3 may not actually “kill” ChatGPT, but it has put a crack in its aura of invincibility.